Related Work

Brandon Yang, Gabriel Bender, Quoc V. Le, Jiquan Ngiam.

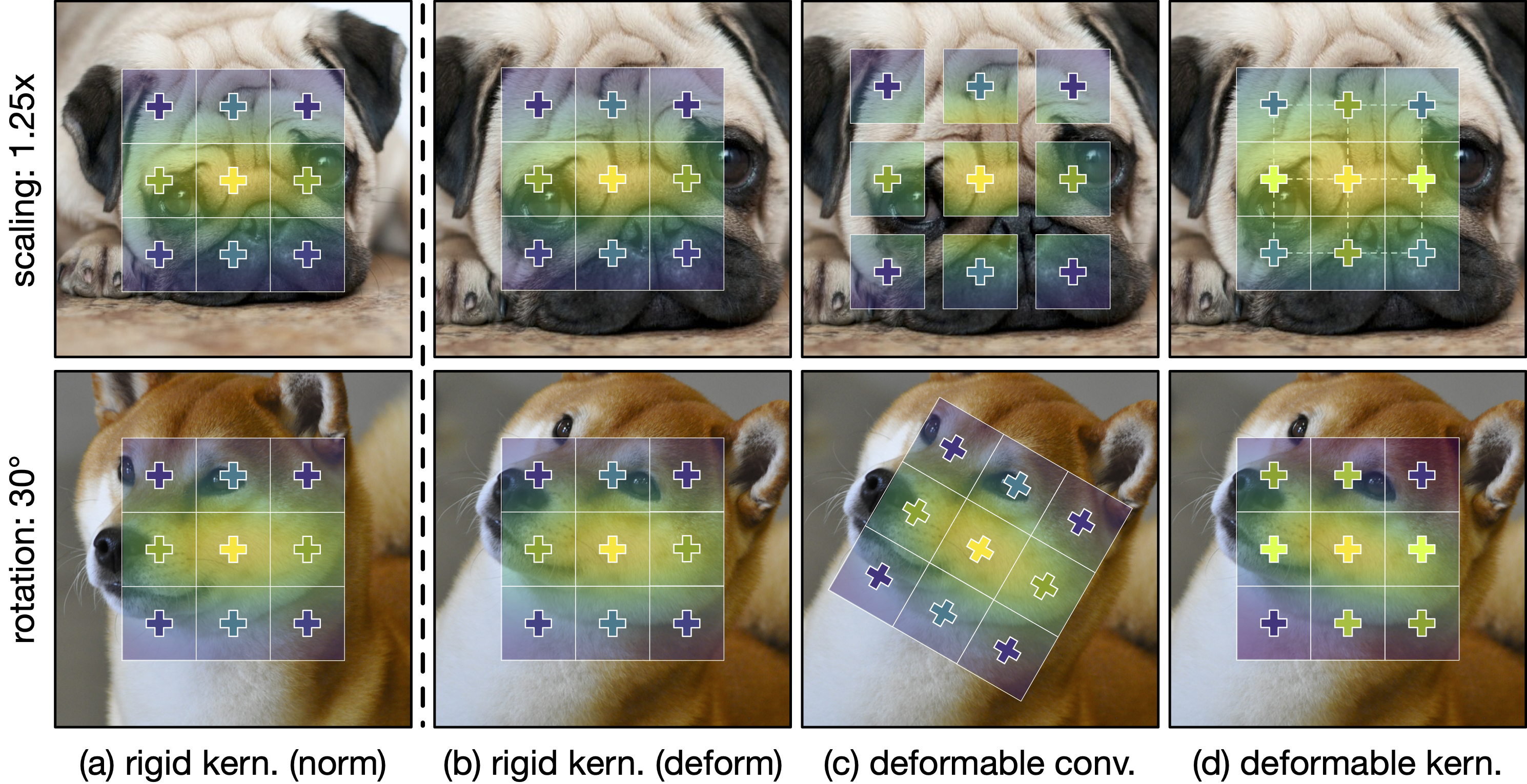

CondConv: Conditionally Parameterized Convolutions for Efficient Inference.

In NeurIPS, Apr 2019.

[PDF]

[GitHub]

Xizhou Zhu, Han Hu, Stephen Lin, Jifeng Dai.

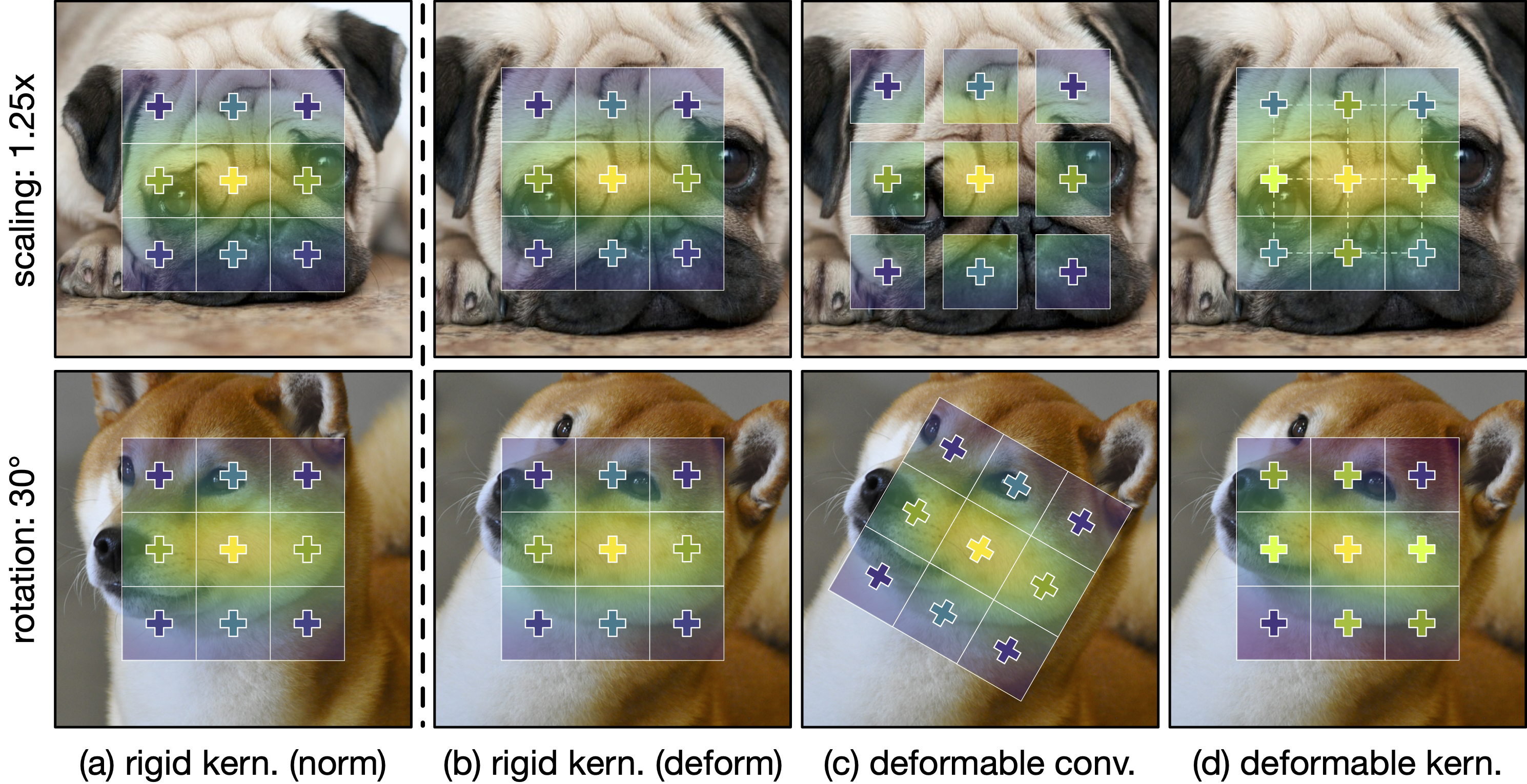

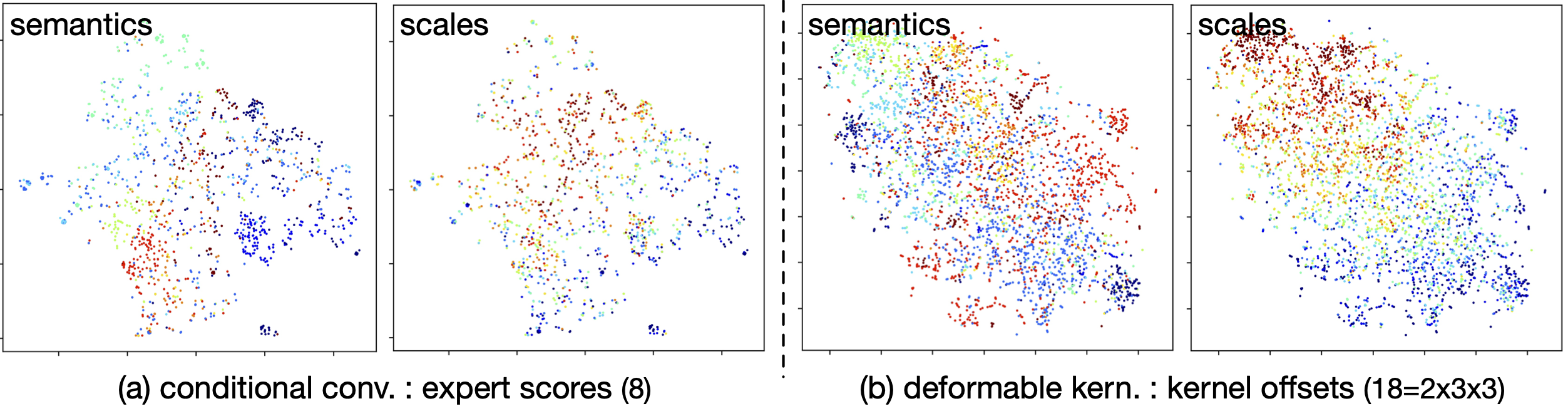

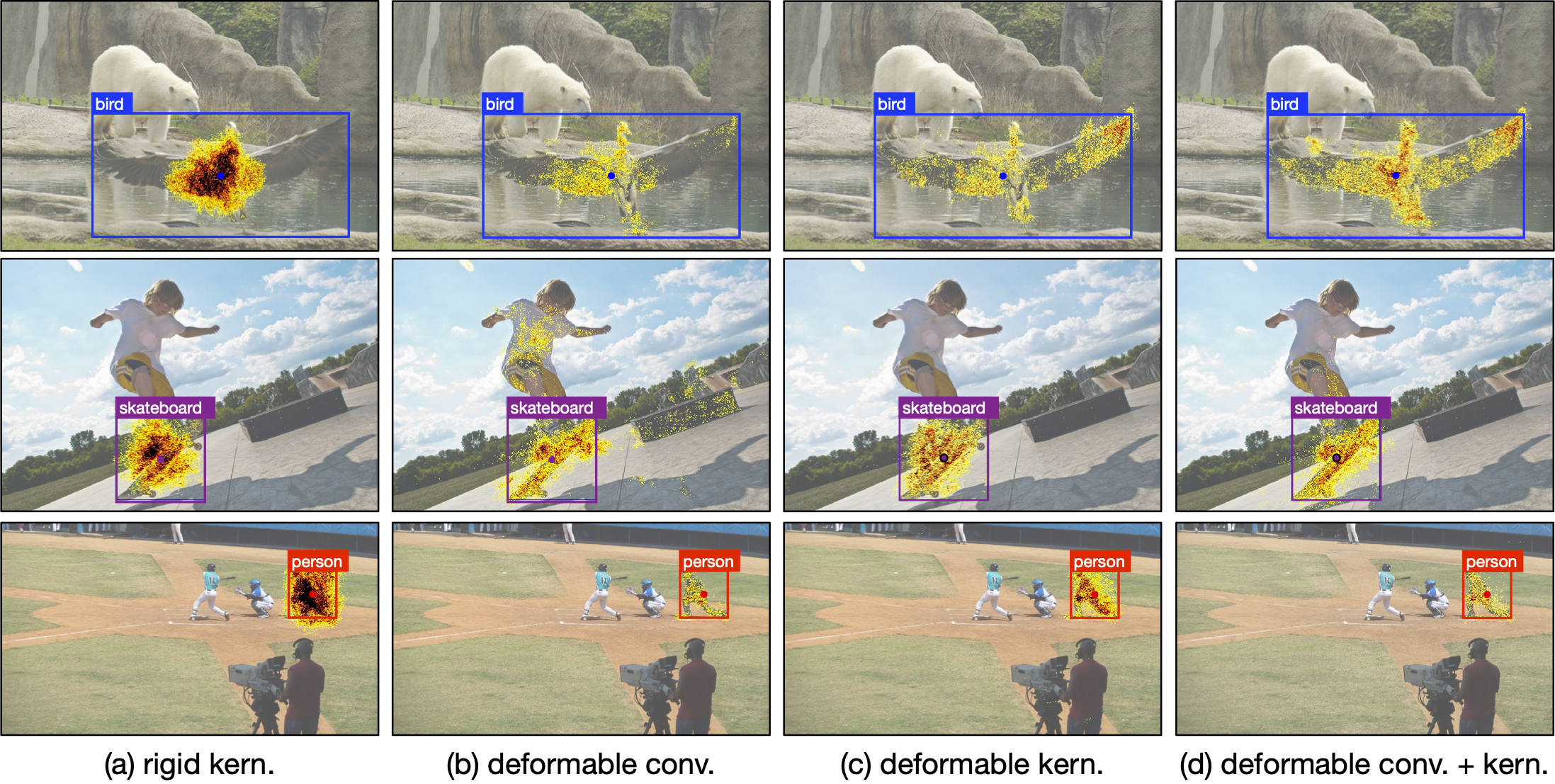

Deformable ConvNets V2: More Deformable, Better Results.

In CVPR, Nov 2018.

[PDF]

[GitHub]

Jifeng Dai*, Haozhi Qi*, Yuwen Xiong*, Yi Li*, Guodong Zhang*, Han Hu, Yichen Wei.

Deformable Convolutional Networks.

In ICCV, Mar 2017.

[PDF]

[GitHub]

Wenjie Luo*, Yujia Li*, Raquel Urtasun, Richard Zemel.

Understanding the Effective Receptive Field in Deep Convolutional Neural Networks.

In NeurIPS, May 2016.

[PDF]
